You slip on your VR headset, and suddenly you’re standing on a mountain peak, exploring ancient ruins, or battling robots in space. But how do VR goggles work to create such convincing alternate realities? The magic happens through a sophisticated interplay of displays, sensors, lenses, and computing power working together at lightning speed. Modern VR headsets create the illusion of being somewhere else by precisely tracking your movements and adjusting what you see within milliseconds—fast enough to trick your brain into believing the virtual world is real. Understanding how VR goggles work reveals why some experiences feel immersive while others cause discomfort, and what technological breakthroughs are making virtual reality increasingly indistinguishable from our physical world.

When you put on a VR headset, your brain receives visual and auditory cues that conflict with what your body senses. Well-designed VR systems minimize this conflict through precise engineering. The key components must work in close coordination: displays showing slightly different images to each eye, lenses focusing those images across your field of view, sensors sampling your movements hundreds of times per second, and powerful processors updating the virtual world before your brain detects any lag. This article breaks down exactly how VR goggles work, showing how the engineering transforms flat screens into three-dimensional worlds you can explore with natural head movements.

Why Your VR Headset Creates Depth with Dual Display Screens

VR goggles work by exploiting how human vision naturally perceives depth through binocular disparity. Adult eyes sit about 63mm apart on average, so each sees the world from a slightly different angle. High-resolution displays inside your VR headset present two distinct 2D images, one for each eye, rendered from viewpoints offset by this same separation. When your brain fuses these two offset images, it reconstructs the three-dimensional structure of the scene, making virtual objects appear at different distances with realistic volume.
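To make the geometry concrete, here is a minimal Python sketch of how the angle between your two lines of sight shrinks as an object moves farther away. The function names and the 63mm default are illustrative assumptions, not taken from any headset SDK.

```python
import math

def eye_offsets_m(ipd_mm=63.0):
    """Horizontal positions of the left and right virtual cameras, in meters,
    measured from the midpoint between the eyes (assumed default IPD: 63 mm)."""
    half = ipd_mm / 1000.0 / 2.0
    return (-half, half)

def vergence_angle_deg(distance_m, ipd_mm=63.0):
    """Angle between the two eyes' lines of sight when both fixate a point
    straight ahead at distance_m."""
    _, right_x = eye_offsets_m(ipd_mm)
    half_angle = math.atan(right_x / distance_m)
    return math.degrees(2.0 * half_angle)

if __name__ == "__main__":
    for d in (0.5, 2.0, 10.0):
        print(f"{d:>4} m -> {vergence_angle_deg(d):.2f} degrees")
```

Nearby objects produce a large angle and distant ones almost none, which is one reason stereo depth cues feel strongest at arm's length.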

Modern VR headsets use either OLED or LCD display technology, each with distinct advantages. OLED displays provide superior contrast with true black levels since each pixel emits its own light and can turn off completely. This creates more vibrant colors and deeper blacks, though sometimes at the cost of slightly lower pixel density. LCD panels, the most common type, filter white backlight through color filters to deliver high resolution and color accuracy—critical for VR where fine detail matters. Fast-switching LCD technology has emerged as a game-changer, allowing pixels to change state in milliseconds to reduce motion blur during rapid head movements.

The resolution you experience depends on the specific headset model. Most consumer devices offer roughly 2K to 4K resolution per eye, with refresh rates ranging from 80Hz to 144Hz. Higher refresh rates deliver new frames more often, which reduces motion blur and perceived latency. The field of view (FOV) typically spans 90-110 degrees horizontally in most headsets. Specialized models push this boundary further, with some offering up to 200 degrees for greater peripheral immersion that makes the virtual environment feel more complete and less like looking through “goggles.”
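Resolution and field of view trade off against each other, and a rough way to see this is pixels per degree. The sketch below is a simplified average that ignores lens distortion (real density varies across the lens), and the example numbers are hypothetical rather than the specs of any particular headset.

```python
def pixels_per_degree(horizontal_pixels, horizontal_fov_deg):
    """Average angular pixel density across the horizontal field of view.

    Simplification: assumes pixels are spread evenly over the FOV,
    which real VR optics do not do exactly."""
    return horizontal_pixels / horizontal_fov_deg

if __name__ == "__main__":
    # Hypothetical panel: 2000 pixels per eye horizontally.
    for fov in (100.0, 200.0):
        print(f"FOV {fov:.0f} deg -> {pixels_per_degree(2000, fov):.1f} px/deg")
```

Stretching the same panel over a wider field of view lowers the density, which is why very wide-FOV headsets need more pixels to look equally sharp.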

How VR Lenses Trick Your Eyes Into Seeing 3D Worlds

Fresnel lenses are the unsung heroes inside many VR goggles, working to transform small flat displays into expansive virtual environments. These specialized lenses use concentric rings with stepped profiles to achieve a wide field of view while remaining remarkably thin and lightweight compared to traditional optics. When you look through VR lenses, they magnify the flat screen image so that it fills most of your field of view and appears focused at a comfortable distance rather than a few centimeters from your eyes. The Meta Quest 2, for example, uses Fresnel lenses, and hybrid Fresnel designs aim to reduce the “god-ray” artifacts common in pure Fresnel optics while maintaining optical quality.

Your interpupillary distance (IPD), the space between your pupils, must align with the headset’s optical centers for clear, comfortable vision. VR goggles work best when the spacing between their lenses matches your IPD, which for most adults falls roughly between 58mm and 72mm. Without proper adjustment, you might experience blurry or double vision and eye strain. Modern headsets address this with either continuous adjustment knobs or fixed presets: turn the dial until virtual objects snap into sharp focus with no ghosting. A mismatch between your IPD and the range a headset supports is why some users find certain headsets uncomfortable while others work perfectly for them.

Software correction plays a crucial role in delivering distortion-free visuals. Advanced algorithms compensate for optical imperfections inherent in VR lenses through radial distortion correction and chromatic aberration processing. These corrections happen in real-time, ensuring straight lines appear straight and colors remain consistent across your entire field of view. Without this invisible software layer, the virtual environment would appear warped and unnatural, breaking the illusion that makes VR so compelling.
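A common way to model this correction is a radial polynomial: for each pixel on the display, the software works out where in the rendered image to sample, pushing samples outward more strongly toward the edges. The sketch below is a simplified illustration. The coefficients `K1`, `K2` and the per-channel scales are hypothetical values chosen for the example, since real headsets use calibration data specific to their lenses.

```python
# Hypothetical radial distortion coefficients (not from any real headset).
K1, K2 = 0.22, 0.24

# Hypothetical per-channel scale factors: sampling each color at a slightly
# different radius compensates for the lens bending colors differently.
CHANNEL_SCALE = {"r": 0.994, "g": 1.0, "b": 1.006}

def source_coords(x, y, channel="g"):
    """Map a display position (lens center = origin, normalized units) to the
    position in the rendered image that should be sampled for that pixel.

    Uses the radial model r' = r * (1 + K1*r^2 + K2*r^4)."""
    r2 = x * x + y * y
    scale = (1.0 + K1 * r2 + K2 * r2 * r2) * CHANNEL_SCALE[channel]
    return (x * scale, y * scale)

if __name__ == "__main__":
    for x in (0.0, 0.5, 1.0):
        print(x, [round(source_coords(x, 0.0, c)[0], 4) for c in "rgb"])
```

The center of the image is left untouched while the edges are sampled from farther out, which squeezes the displayed picture into a barrel shape that the lens then stretches back to normal.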

Inside-Out Tracking: Why Your Movements Translate Perfectly to VR

When you move your head in VR, the virtual world stays convincingly anchored in place while your view of it changes. That’s inside-out tracking at work. Modern VR goggles use multiple cameras mounted directly on the headset to continuously scan and map your physical environment. The Meta Quest 2, for instance, employs a four-camera array that tracks unique visual features in your room. As you move your head, these cameras detect how those reference points shift across their field of view, allowing the system to calculate your precise position and orientation in real space.

This camera-based tracking works in concert with another critical component: the Inertial Measurement Unit (IMU). Hidden inside your VR headset, this sensor package contains accelerometers, gyroscopes, and sometimes magnetometers that measure your head’s rotation and acceleration at rates of around 1000Hz. While the cameras provide positional data, the IMU delivers immediate updates on rotational movement: when you quickly turn your head, the IMU ensures the virtual world responds instantly, before the cameras can fully process the new position. Because IMU readings drift when integrated over time, the camera data continually corrects them.

The magic happens through sensor fusion algorithms that combine data from multiple sources into a single, accurate representation of your movements. Kalman filters and complementary filters process this information to create smooth, low-latency tracking with six degrees of freedom (6DOF)—meaning the system tracks both rotational movement (pitch, yaw, roll) and positional movement (forward/backward, up/down, left/right). This comprehensive tracking is why you can lean in to examine virtual objects closely or duck behind cover in a game, with the virtual world responding exactly as the physical world would.
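The simplest of these approaches, a complementary filter, can be sketched in a few lines. The example below is a toy one-axis simulation under stated assumptions: the head is held still at a 10 degree pitch, the gyroscope has a constant 0.5 degrees-per-second bias, and the accelerometer reading is idealized as noise-free. All names and numbers are made up for illustration, and a production tracker would use a more elaborate filter.

```python
def complementary_step(pitch_deg, gyro_rate_dps, accel_pitch_deg, dt, alpha=0.98):
    """One filter update: trust the gyro for fast changes (weight alpha) and
    the accelerometer's gravity-based pitch for long-term stability."""
    gyro_pitch = pitch_deg + gyro_rate_dps * dt
    return alpha * gyro_pitch + (1.0 - alpha) * accel_pitch_deg

def simulate(seconds=10.0, rate_hz=1000, true_pitch_deg=10.0, gyro_bias_dps=0.5):
    """Return (fused_pitch, gyro_only_pitch) after holding the head still."""
    dt = 1.0 / rate_hz
    fused = gyro_only = true_pitch_deg
    for _ in range(int(seconds * rate_hz)):
        gyro_reading = gyro_bias_dps      # head is still, so the gyro reports only its bias
        accel_reading = true_pitch_deg    # idealized, noise-free accelerometer pitch
        gyro_only += gyro_reading * dt    # naive integration accumulates the bias
        fused = complementary_step(fused, gyro_reading, accel_reading, dt)
    return fused, gyro_only

if __name__ == "__main__":
    fused, gyro_only = simulate()
    print(f"fused: {fused:.3f} deg, gyro only: {gyro_only:.3f} deg")
```

Integrating the gyro alone drifts steadily away from the true angle, while the fused estimate stays close to it. That is the basic bargain of sensor fusion: a fast but drifting sensor is kept honest by a slower, drift-free one.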

Why Low Latency Is Non-Negotiable for Comfortable VR Experiences

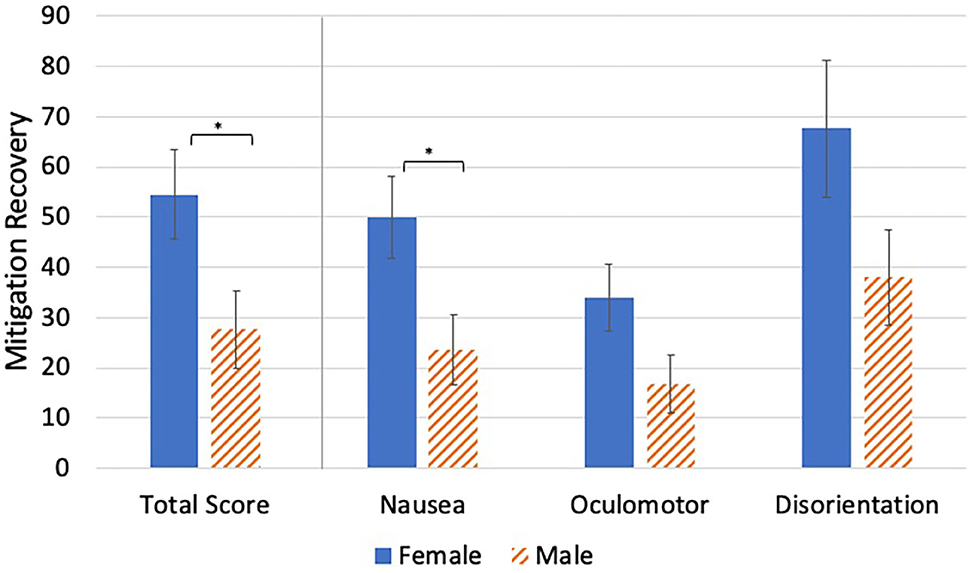

The difference between immersive VR and motion sickness often comes down to a single critical metric: motion-to-photon latency. This is the time between your physical movement and when the updated image appears on the display. For VR to feel natural and comfortable, this latency should stay below about 20 milliseconds, which is less than two frames at 90Hz (a single frame lasts roughly 11.1 milliseconds). When latency exceeds this threshold, your visual system detects a disconnect between what your eyes see and what your inner ear senses, triggering nausea and breaking immersion.
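The arithmetic behind that budget is easy to check. The sketch below computes the frame time for a given refresh rate and adds up a pipeline of stages. The stage names and durations in `EXAMPLE_PIPELINE_MS` are hypothetical placeholders, not measurements from a real headset.

```python
def frame_time_ms(refresh_hz):
    """Duration of one displayed frame in milliseconds."""
    return 1000.0 / refresh_hz

def motion_to_photon_ms(stage_ms):
    """Total latency as the sum of every stage between movement and light."""
    return sum(stage_ms.values())

def within_budget(stage_ms, budget_ms=20.0):
    """True if the summed pipeline latency fits the comfort budget."""
    return motion_to_photon_ms(stage_ms) <= budget_ms

# Hypothetical stage timings, for illustration only.
EXAMPLE_PIPELINE_MS = {
    "sensor_read_and_fusion": 2.0,
    "render": 11.0,
    "scanout_and_pixel_switch": 5.0,
}

if __name__ == "__main__":
    print(f"frame at 90 Hz: {frame_time_ms(90):.1f} ms")
    print(f"pipeline total: {motion_to_photon_ms(EXAMPLE_PIPELINE_MS):.1f} ms")
    print("within 20 ms budget:", within_budget(EXAMPLE_PIPELINE_MS))
```

At 90Hz a frame lasts about 11.1 ms, so a single missed frame on top of this example pipeline would already push the total past 20 ms.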

VR goggles work around processing limitations through clever techniques like asynchronous timewarp and reprojection. When your system struggles to render a full frame in time, these algorithms use the latest head-tracking data to “warp” the previous frame, creating a smooth transitional image while the system catches up. This happens so quickly you never notice the trick—your brain perceives continuous, responsive movement even when the graphics processor is momentarily overloaded.
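The core idea can be shown with a heavily simplified, yaw-only version: measure how far the head has turned since the frame was rendered, convert that angle to pixels, and slide the old image by that amount. Real implementations re-project the frame with a full 3D rotation, so treat this as a sketch. The function names, the small-angle pixels-per-degree conversion, and the convention that yaw increases when turning right are all assumptions made for the example.

```python
def reprojection_shift_px(render_yaw_deg, latest_yaw_deg, pixels_per_degree):
    """Horizontal shift to apply to the previous frame.

    Assumes yaw increases when the head turns right, in which case the
    image content must slide left (a negative shift)."""
    return round(-(latest_yaw_deg - render_yaw_deg) * pixels_per_degree)

def warp_row(row, shift_px, fill=0):
    """Shift one row of pixels by shift_px, filling uncovered pixels."""
    width = len(row)
    out = []
    for i in range(width):
        src = i - shift_px  # where this output pixel comes from in the old frame
        out.append(row[src] if 0 <= src < width else fill)
    return out

if __name__ == "__main__":
    old_row = [1, 2, 3, 4, 5]
    shift = reprojection_shift_px(30.0, 30.05, 20.0)  # head turned 0.05 deg right
    print(shift, warp_row(old_row, shift))
```

The filled pixels at the edge are the price of the trick: the old frame contains no data for the newly revealed sliver of the scene, which is why the technique works best for small, brief corrections.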

Advanced headsets employ foveated rendering to maintain performance without sacrificing visual quality. By using eye-tracking technology to determine exactly where you’re looking, the system renders only that central area in high detail while reducing quality in your peripheral vision. Your brain doesn’t notice the difference because peripheral vision has lower resolution naturally. This technique dramatically reduces the GPU load while maintaining the perception of sharp, detailed visuals—making high-fidelity VR possible on standalone headsets without requiring a powerful gaming PC.
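To get a feel for the savings, the sketch below assigns a resolution scale to each part of the view based on its angular distance from the gaze point, then estimates the total shading work relative to rendering everything at full detail. The zone boundaries (10 and 25 degrees), the scales, and the square 100 degree view are hypothetical choices for illustration, and the angular grid is treated as if pixels were spread evenly.

```python
import math

def resolution_scale(eccentricity_deg):
    """Per-axis resolution scale for a region at the given angle from gaze.
    Zone limits and scales are hypothetical."""
    if eccentricity_deg <= 10.0:
        return 1.0    # foveal zone: full detail
    if eccentricity_deg <= 25.0:
        return 0.5    # mid zone: half resolution per axis
    return 0.25       # periphery: quarter resolution per axis

def relative_shading_cost(fov_deg=100.0, gaze_x_deg=0.0, gaze_y_deg=0.0, step_deg=1.0):
    """Fraction of full-resolution shading work needed with foveation,
    estimated by sampling the view on a regular angular grid."""
    n = int(fov_deg / step_deg)
    total = 0.0
    for i in range(n):
        for j in range(n):
            x = -fov_deg / 2.0 + (i + 0.5) * step_deg
            y = -fov_deg / 2.0 + (j + 0.5) * step_deg
            ecc = math.hypot(x - gaze_x_deg, y - gaze_y_deg)
            total += resolution_scale(ecc) ** 2  # pixel count scales with area
    return total / (n * n)

if __name__ == "__main__":
    print(f"relative cost, gaze centered: {relative_shading_cost():.3f}")
```

Under these made-up zones, the foveated frame needs only a small fraction of the shading work of a uniformly sharp one, because the full-detail region covers so little of the view.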

Standalone vs. PC-Powered VR: Processing Power Differences Explained

When you’re choosing VR goggles, understanding how they process information reveals why some systems deliver more immersive experiences than others. Standalone headsets like the Meta Quest series contain everything needed for VR in a single unit—powered by specialized Qualcomm XR2 chipsets that integrate CPU, GPU, and AI processing. This System on a Chip (SoC) approach gives you complete freedom to move without cables, but with some graphical compromises compared to PC-powered systems.

PC-tethered VR headsets like the Valve Index or HTC Vive rely on external computing power to generate more complex virtual environments. These systems connect via cable (or increasingly, wirelessly through technologies like Wi-Fi 6E) to a gaming PC that handles the heavy graphical lifting. The result is significantly more detailed textures, realistic physics, and complex environments that standalone headsets can’t yet match. However, this comes with trade-offs in mobility and setup complexity—your VR experience becomes limited by the length of your cable or wireless signal strength.

The processing demands of VR explain why latency reduction techniques are so critical. Whether your VR goggles work through an onboard chip or external PC, every component in the pipeline, from sensor input to displayed image, must operate with minimal delay. Modern systems achieve this through foveated rendering (either fixed, which always lowers detail toward the edges of the view, or eye-tracked, which focuses processing power where you’re looking), motion prediction algorithms that anticipate your next movement, and optimized data pipelines that prioritize visual updates over less critical processes. These innovations ensure that even when processing power is limited, the essential elements for immersion, low latency and responsive tracking, remain intact.
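Motion prediction in its most basic form is simple extrapolation: take the current head angle and angular velocity, and project them forward by the expected delay until the image reaches your eyes. The sketch below shows a one-axis version with an optional acceleration term. The function name and parameters are hypothetical, and real systems use more sophisticated predictors.

```python
def predict_yaw_deg(yaw_deg, yaw_rate_dps, lookahead_s, yaw_accel_dps2=0.0):
    """Extrapolate head yaw lookahead_s seconds into the future, assuming
    constant angular acceleration over that short interval."""
    return (yaw_deg
            + yaw_rate_dps * lookahead_s
            + 0.5 * yaw_accel_dps2 * lookahead_s ** 2)

if __name__ == "__main__":
    # Head at 30 degrees, turning at 200 degrees per second, 20 ms of latency to hide.
    print(predict_yaw_deg(30.0, 200.0, 0.020))
```

Rendering for the predicted pose rather than the measured one means the frame is closer to correct by the time it is actually displayed. The shorter the lookahead, the smaller the prediction error, which is another reason every millisecond in the pipeline matters.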

Future Tech: Eye Tracking and Varifocal Displays Changing VR

Next-generation VR goggles work with eye-tracking technology that’s transforming both performance and realism. Advanced systems like those in the Varjo XR-3 use infrared sensors to precisely monitor your gaze direction, enabling true foveated rendering where only the area you’re directly looking at receives high-resolution processing. But beyond performance gains, eye tracking creates more natural social interactions in VR—your virtual avatar can now make realistic eye contact, conveying subtle emotional cues that were previously impossible.

Varifocal displays represent perhaps the most significant upcoming breakthrough in how VR goggles work. Current headsets focus images at a fixed distance (typically 1-2 meters). Your eyes converge on virtual objects at many different apparent distances but must keep focusing at that one fixed distance, a mismatch that is a major cause of eye strain during extended sessions. Experimental systems like Meta’s Half Dome prototype use varifocal optics that shift the focal plane based on where you’re looking, mimicking how your eyes naturally focus in the real world. This technology could finally eliminate the “vergence-accommodation conflict” that has limited VR comfort.
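The size of this conflict is usually expressed in diopters, the reciprocal of distance in meters. The sketch below compares where your eyes converge with where the optics force them to focus. The 1.5 meter default focal distance is an assumption picked from the 1-2 meter range mentioned above, and the function names are illustrative.

```python
def diopters(distance_m):
    """Optical power needed to focus at a given distance, in diopters."""
    return 1.0 / distance_m

def vac_mismatch_diopters(object_distance_m, focal_distance_m=1.5):
    """Gap between where the eyes converge (the virtual object) and where
    they must focus (the headset's focal plane)."""
    return abs(diopters(object_distance_m) - diopters(focal_distance_m))

if __name__ == "__main__":
    for d in (0.3, 0.5, 1.5, 10.0):
        print(f"object at {d} m -> mismatch {vac_mismatch_diopters(d):.2f} D")
```

The mismatch grows quickly for close-up objects and vanishes when the object sits at the focal plane. A varifocal display effectively moves `focal_distance_m` to match the object you are looking at, driving the mismatch toward zero.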

Emerging haptic feedback systems are making virtual objects feel increasingly tangible. While current VR controllers offer basic vibration, next-generation gloves and suits provide directional force feedback that simulates texture, resistance, and even temperature. When combined with visual and audio cues, these systems create the illusion of physically interacting with virtual environments—feeling the recoil of a virtual gun, the texture of a virtual surface, or the impact of a virtual punch. As these technologies mature, the distinction between what’s real and virtual will continue to blur, bringing us closer to the ultimate promise of virtual reality: a completely convincing alternate world you can see, hear, and feel.